Learn how websites handle massive amounts of traffic

Billions of people use the internet every day. Each of them connects to a whole host of websites and services throughout the day, and some websites handle millions of these connections on their own. The biggest websites on the internet, such as Facebook and YouTube, serve billions of people around the world, so any downtime means serious lost revenue.

There are a number of techniques that websites can use to keep their services available and their servers up and running. Without measures in place to handle a high volume of traffic, there is a limit to the number of users a website can serve simultaneously. If a website experiences an unexpectedly high volume of traffic, it can become unavailable to everyone.

The Popularity of Facebook

Facebook is one of the most popular websites on the internet. The social media platform has billions of users around the globe and serves millions of them simultaneously. We all know that Facebook is popular, but the scale of its success and the depth of its reach still surprise some people. For example, every single day Facebook users send each other more than 10 million messages and collectively click the "Like" button more than 4.5 billion times.
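To put the "Like" figure in perspective, here is a rough back-of-the-envelope calculation. It assumes the clicks are spread evenly across the 86,400 seconds in a day, which they are not, so real peaks would be higher than this average:

$$
\frac{4.5 \times 10^{9}\ \text{likes/day}}{86{,}400\ \text{s/day}} \approx 52{,}000\ \text{likes per second}
$$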

To cope with this massive volume of traffic, Facebook combines existing techniques with its own proprietary technology. This lets it minimize the load on any individual server while also reducing the amount of data sent between its users and its servers. That is vital given how many of Facebook’s users stay connected to the service on their mobile devices throughout the day. Not only does this improve the user experience, it also keeps the cost of running those servers as low as possible.

Keeping Servers Online

Servers store the content that websites and online services need to run. Whenever you load a website or online service, you download the data it requires from the web server that hosts it. That data travels from the server to users in the form of packets: each file is broken down into many small packets. Because packets from many different connections can be interleaved on the same network links, a single large download does not monopolize the connection, and many users can download the same file at the same time without causing slowdowns.
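To make the idea concrete, here is a toy Python model of several downloads sharing one link. The packet size of 1,460 bytes (a typical TCP payload over Ethernet) and the helper names `to_packets` and `interleave` are illustrative assumptions; real networks also handle ordering, loss and retransmission, which this sketch ignores.

```python
from itertools import zip_longest

# Assumed payload size per packet; 1,460 bytes is a common TCP payload on Ethernet.
PAYLOAD_BYTES = 1460

def to_packets(name: str, data: bytes, size: int = PAYLOAD_BYTES) -> list[tuple[str, int, bytes]]:
    """Split one file into numbered (download name, sequence number, chunk) packets."""
    return [(name, seq, data[i:i + size]) for seq, i in enumerate(range(0, len(data), size))]

def interleave(*streams: list) -> list:
    """Send one packet from each active download in turn, so no download blocks the others."""
    sent = []
    for round_ in zip_longest(*streams):
        # zip_longest pads finished downloads with None; skip those slots.
        sent.extend(p for p in round_ if p is not None)
    return sent

alice = to_packets("alice", b"x" * 5000)   # 4 packets
bob = to_packets("bob", b"y" * 2000)       # 2 packets
order = [(name, seq) for name, seq, _ in interleave(alice, bob)]
print(order)
# [('alice', 0), ('bob', 0), ('alice', 1), ('bob', 1), ('alice', 2), ('alice', 3)]
```

Bob's small download finishes after two rounds instead of waiting for Alice's larger file to complete, which is the practical benefit of splitting data into packets.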

But beyond a certain point, this alone isn’t enough. The busiest online servers need to take additional measures to stay up constantly. For businesses like Facebook, every minute of downtime means lost revenue and angry users. Online services that gain a reputation for unreliable uptime will struggle to build their user base.

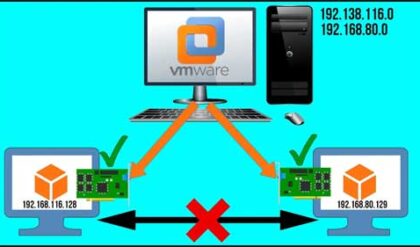

The first line of defense against server downtime is redundancy. This simply means that if a server becomes unavailable, users are routed to a failover server instead. Servers are designed to handle many simultaneous connections, and the busiest websites require more powerful machines, but as with any electronic device, there is always the possibility of hardware failure. If a server’s hard drive breaks and there is no backup, data can be irretrievably lost.
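Failover can be sketched in a few lines of Python. The server names and the `is_healthy` check are hypothetical; a real system would probe servers over the network (for example with periodic health checks) rather than consult a set of hosts already known to be down.

```python
def is_healthy(server: str, down: set[str]) -> bool:
    """Hypothetical health check: a server counts as healthy unless it is in `down`."""
    return server not in down

def pick_server(primary: str, backups: list[str], down: set[str]) -> str:
    """Return the first healthy server, preferring the primary over the backups."""
    for server in [primary, *backups]:
        if is_healthy(server, down):
            return server
    raise RuntimeError("no healthy server available")

print(pick_server("web-1", ["web-2", "web-3"], down=set()))      # web-1
print(pick_server("web-1", ["web-2", "web-3"], down={"web-1"}))  # web-2
```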

Balancing the Load

Load balancing is just what it sounds like: spreading the computational load required to serve all connected users across multiple servers. Online services like Facebook handle far too many connections for any single server to cope with. A simple approach is round-robin DNS: whenever a Domain Name System (DNS) server receives a lookup for the domain, it rotates through the IP addresses associated with that domain in a circular fashion, so successive visitors are sent to different servers. This is a fine solution for many websites, but the biggest online services use their own custom systems to balance the load.
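The rotation that round-robin DNS performs can be modeled in Python. This is a simplified sketch, not a real DNS server: the `RoundRobinResolver` class is hypothetical, and the addresses come from the 192.0.2.0/24 documentation range (RFC 5737). It also assumes each client connects to the first address in the answer, which many clients do, though caches and client-side address sorting can change what a given visitor actually uses.

```python
class RoundRobinResolver:
    """Toy model of round-robin DNS: each lookup rotates the address list by one."""

    def __init__(self, addresses: list[str]):
        self._addresses = list(addresses)
        self._offset = 0

    def resolve(self) -> list[str]:
        # Return every address, rotated so a different one comes first each time.
        n = len(self._addresses)
        answer = [self._addresses[(self._offset + i) % n] for i in range(n)]
        self._offset = (self._offset + 1) % n
        return answer

resolver = RoundRobinResolver(["192.0.2.10", "192.0.2.11", "192.0.2.12"])
for _ in range(4):
    # Assumption: the client connects to the first address in the answer.
    print(resolver.resolve()[0])   # prints .10, .11, .12, then .10 again
```

The appeal of this approach is that it needs no extra infrastructure; its weakness is that DNS has no idea whether a given server is busy or even online.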

When you connect to Facebook, your connection is automatically routed to a server that is able to handle it. If a server becomes overloaded, Facebook stops sending new connections there. Load balancing used to require dedicated physical hardware, which is still sometimes used, but it can now also be done in software in the cloud.
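One common way to stop sending new connections to an overloaded server is a least-connections strategy with a capacity cap. The sketch below is not Facebook's system; the `Server` fields, capacities and connection counts are hypothetical, and it picks the server with the lowest load relative to its capacity (a weighted variant of least-connections).

```python
from dataclasses import dataclass

@dataclass
class Server:
    name: str
    active: int     # connections currently being served
    capacity: int   # hypothetical limit at which the server counts as overloaded

def choose(servers: list[Server]) -> Server:
    """Least-connections choice that skips servers already at capacity."""
    available = [s for s in servers if s.active < s.capacity]
    if not available:
        raise RuntimeError("all servers are at capacity")
    # Prefer the server with the lowest load relative to its capacity.
    best = min(available, key=lambda s: s.active / s.capacity)
    best.active += 1   # the new connection now counts against this server
    return best

pool = [Server("a", 90, 100), Server("b", 100, 100), Server("c", 40, 50)]
picked = choose(pool)
print(picked.name)   # c  (b is full; c is at 80% versus a at 90%)
```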

Load balancing isn’t just about minimizing downtime; it also serves a practical economic function. Consider a business such as Facebook, with lots of servers running around the world. A server sitting idle, not handling user requests, requires very little power. As more users connect, the server has to work harder and draw more power, so you would expect power consumption to rise steadily with load.

Facebook found that during quiet periods, servers under a low load consumed more power than idle servers, as expected. What wasn’t expected was that servers under a medium load drew about as much power as servers under a low load. It is therefore more economical for Facebook to concentrate traffic on fewer servers running at medium load and leave the rest idle, rather than spreading it thinly so that every server runs at a low load.
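A quick calculation shows why packing traffic onto fewer servers can save energy. The wattages below are hypothetical round numbers chosen only to match the pattern described above (idle is cheap, and medium load costs little more than low load); they are not Facebook's measurements. The sketch also assumes a medium-loaded server handles twice the traffic of a low-loaded one, so both layouts serve the same total traffic.

```python
# Hypothetical per-server power draw, in watts, at each load level.
POWER_WATTS = {"idle": 50, "low": 120, "medium": 135}

def total_power(servers_by_level: dict[str, int]) -> int:
    """Sum the power drawn by a fleet, given how many servers sit at each load level."""
    return sum(POWER_WATTS[level] * count for level, count in servers_by_level.items())

# Same total traffic either way (assumption: one medium server = two low servers).
spread = total_power({"low": 10})                # every server lightly loaded
packed = total_power({"medium": 5, "idle": 5})   # half the fleet busy, half idle
print(spread, packed)   # 1200 925
```

Under these assumed figures, concentrating the traffic cuts power draw by roughly a quarter while serving the same users.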

Keeping Servers Safe

A server is a physical object, a machine that can become damaged or broken. If the server is physically damaged or destroyed, it won’t be able to accept incoming connections. This means that an issue at a data center, such as a power outage, a natural disaster or even a plumbing problem, can make vital servers inaccessible.

Like any computer, a server can get very hot when its processor is under heavy load. On top of the cooling systems built into the servers themselves, the data centers that house them are air-conditioned and kept cool. Their climate control systems also regulate moisture, preventing the environment from becoming too humid.

Depending on where a data center is located, it may also need to be designed with potential natural disasters in mind. Data centers in California, for example, are housed in structures designed to withstand earthquakes, and the server racks themselves are reinforced so they won’t collapse. The biggest tech firms have servers in data centers around the globe, so an issue in one data center doesn’t affect the whole network.
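Spreading servers across regions also lets a service steer each user to a nearby data center and skip any that are down. A minimal sketch, assuming the service already knows the user's round-trip time to each site; the data center names and latency figures are hypothetical.

```python
def route(latencies_ms: dict[str, float], down: set[str]) -> str:
    """Send the user to the lowest-latency data center that is still online."""
    candidates = {dc: ms for dc, ms in latencies_ms.items() if dc not in down}
    if not candidates:
        raise RuntimeError("no data center reachable")
    return min(candidates, key=candidates.get)

# Hypothetical round-trip times from one user to three data centers.
latency = {"us-west": 20.0, "us-east": 70.0, "europe": 140.0}
print(route(latency, down=set()))          # us-west
print(route(latency, down={"us-west"}))    # us-east
```

If the nearest site goes offline, the user is simply served from the next closest one: slower, but still available.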

Protection and Monitoring

A distributed denial-of-service (DDoS) attack is a type of cyberattack that floods an internet server with traffic from many sources at once in order to overwhelm it and render it inaccessible. Data centers employ engineers who monitor their networks for unusual traffic and respond to threats, in addition to a number of automated methods commonly used to mitigate DDoS attacks.
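Rate limiting is one widely used automated defense. The sketch below implements a token bucket in Python, assuming a hypothetical policy of 5 requests per second per client IP with bursts of up to 10; the fake clock in the demo only makes the output deterministic. Because a DDoS attack spreads its traffic across many sources, per-client limits like this are only one layer of defense.

```python
import time
from typing import Callable

class TokenBucket:
    """Token-bucket rate limiter: permits short bursts but caps the sustained request rate."""

    def __init__(self, rate: float, burst: int, clock: Callable[[], float] = time.monotonic):
        self.rate = rate              # tokens added back per second
        self.burst = burst            # maximum number of tokens the bucket can hold
        self.tokens = float(burst)    # start full, so an initial burst is allowed
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to the time elapsed, but never beyond the burst size.
        self.tokens = min(float(self.burst), self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1          # spend one token on this request
            return True
        return False                  # over the limit: drop or delay the request

# Hypothetical policy: one bucket per client IP, 5 requests/s sustained, bursts of 10.
buckets: dict[str, TokenBucket] = {}

def accept(client_ip: str) -> bool:
    if client_ip not in buckets:
        buckets[client_ip] = TokenBucket(rate=5, burst=10)
    return buckets[client_ip].allow()

# Deterministic demo using a fake clock instead of real time.
fake_now = [0.0]
demo = TokenBucket(rate=5, burst=10, clock=lambda: fake_now[0])
results = [demo.allow() for _ in range(12)]
print(results.count(True), results.count(False))   # 10 2
fake_now[0] = 1.0                                   # one second later, 5 tokens are back
print(demo.allow())                                 # True
```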

However, even with these defenses in place, successful DDoS attacks still occur and can cause serious problems for data centers and their customers. Data centers therefore also have procedures in place for responding to DDoS attacks when they are detected.

Individual servers for today’s most popular websites and online services handle thousands of connections at once. With millions of users accessing these services simultaneously, keeping servers from becoming overwhelmed requires adequate infrastructure. Balancing loads intelligently across multiple servers lets websites use their available resources as efficiently as possible, while redundancy ensures that backups are available when things do go wrong.

Whenever you visit a website or scroll through your Facebook feed, you are loading content from many different servers. To the end user, everything happens almost instantly, but behind the scenes there is a lot more going on than first appears. Without measures in place to distribute the load properly, the servers behind the biggest websites would quickly become overwhelmed.

Author:

Adam Dubois. I’m a French geek who has long been into tech of all kinds. I got into web development in my teens, after creating my first personal blog. Now my main focus is Proxyway. I share a fascination with privacy-enabling tech and seek to create the most comprehensive resource on all things proxy.